Author: Fatih Çağatay Akyön – Lead Machine Learning Engineer, OBSS

LinkedIn: https://www.linkedin.com/in/fcakyon/

Face anti-spoofing is a critical component of modern biometric security, designed to protect face recognition systems against presentation attacks such as mask spoofing, replay attacks, and printed photos.

Biometric identification is one of the oldest methods of personal authentication. Passwords and keys can be obtained through espionage, stolen, forgotten, or imitated. However, imitating or losing a person’s unique biological characteristics is far more difficult. These characteristics may include fingerprints, voice, the vascular structure of the retina, and more.

As you might expect, there are also attempts to deceive biometric systems. This article focuses on how attackers try to bypass facial recognition systems by impersonating another person — and how such attempts can be detected.

What You Will Learn from This Article

- The relationship between face recognition and face spoofing

- Key terminology and evaluation metrics in face anti-spoofing

- Types of face spoofing attacks

- Application areas of face anti-spoofing methods

- Key points from selected academic studies on face anti-spoofing

- The current state of face spoofing datasets

From Face Recognition to Face Spoofing

Today’s face recognition systems demonstrate remarkable accuracy. With the emergence of large datasets and complex architectures, it has become possible to achieve error rates as low as 0.000001 (one error in a million). These systems are now suitable for deployment on mobile platforms.

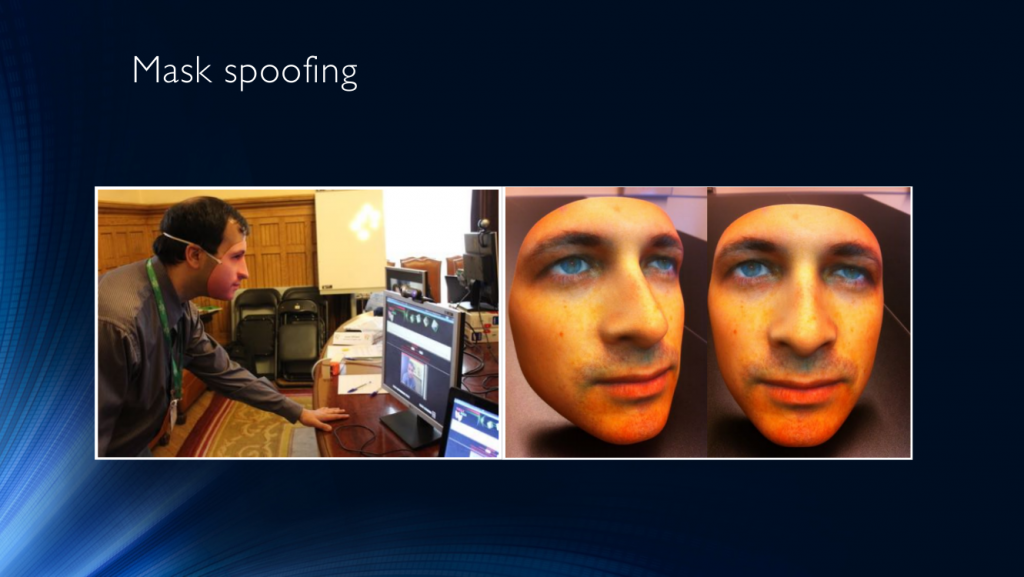

The primary obstacle preventing their widespread everyday use has been their vulnerabilities. In real-world scenarios, masks are among the most commonly used tools for impersonation. For example, as shown below, a black mask was used in a bank robbery.

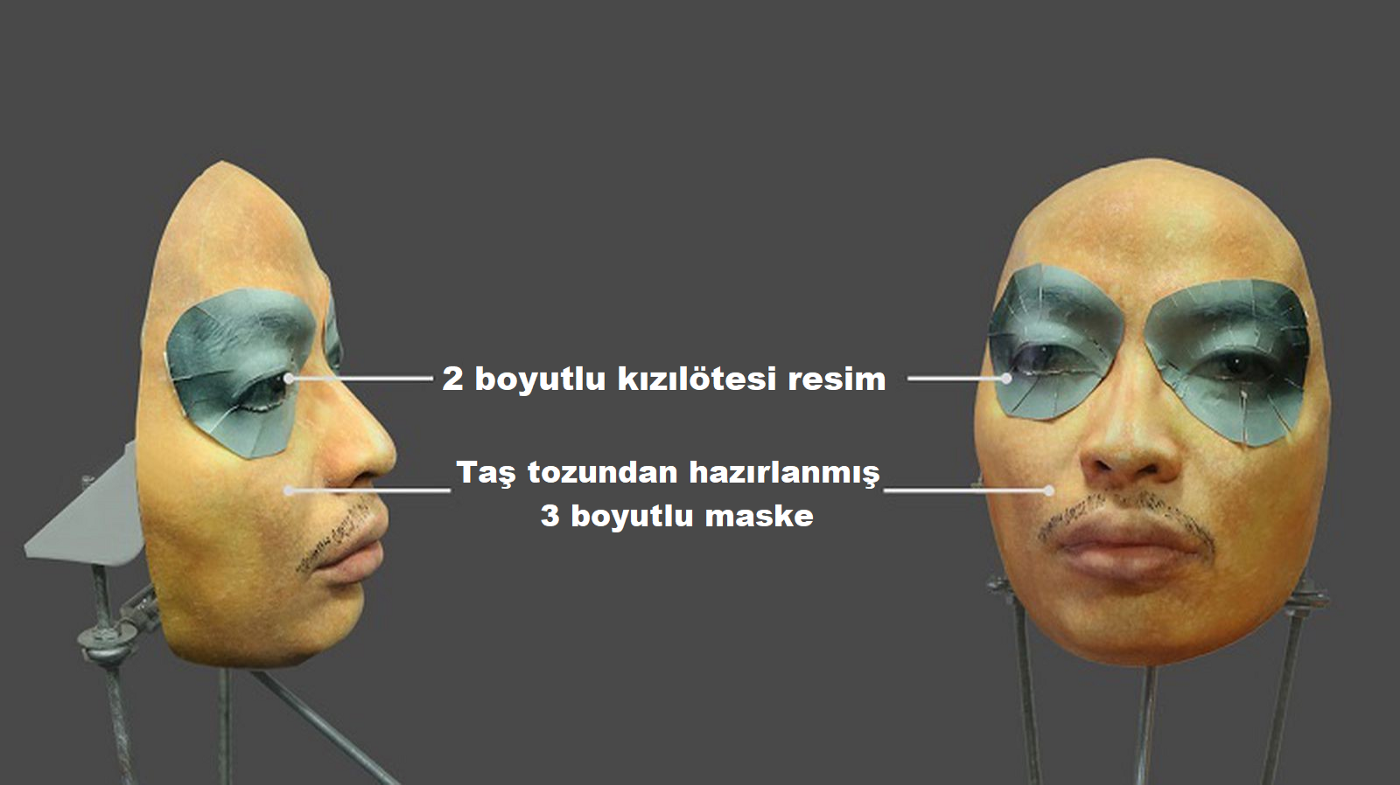

The most effective way to deceive a face recognition system is to present someone else’s face instead of your own. Masks can vary widely in quality — from a printed photograph of another person’s face to highly sophisticated heated 3D masks. They may be presented as a printed sheet, displayed on a screen, or worn directly on the face.

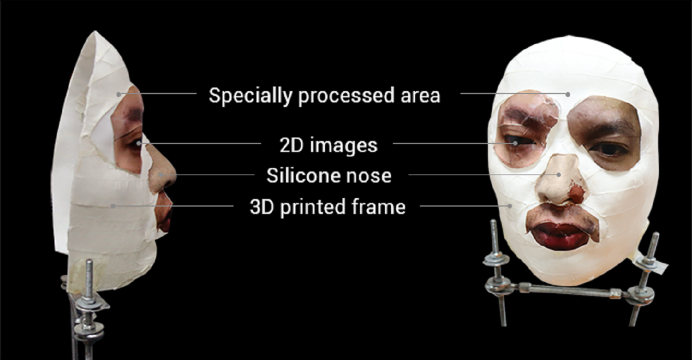

Interest in face spoofing increased significantly after attempts to bypass Samsung’s Iris Scanner and Apple’s Face ID system. In the image below, you can see the mask developed by the Vietnamese security company Bkav to bypass Face ID. The mask consists of a silicone nose, 3D-printed components, and 2D photographs. More details can be found in the related video.

Source: https://www.wired.com/story/hackers-say-broke-face-id-security/

The existence of such vulnerabilities poses serious risks in domains such as banking systems or urban security infrastructures, where a successful breach could lead to significant losses.

Terminology

The field of face anti-spoofing research is relatively new, and no universally accepted terminology exists yet. Nevertheless, I will attempt to translate the key terms as accurately as possible:

Spoofing attack: An attempt to deceive an identification system by presenting a fake biometric parameter (in this case, a person or a person’s face).

Anti-spoof: A set of protective measures designed to counter such deception attempts. These may be implemented as various technologies and algorithms integrated into the pipeline of an identification system.

Presentation attack: The act of presenting an image, recorded video, or similar artifact to the system in order to cause misidentification or prevent correct identification of the user.

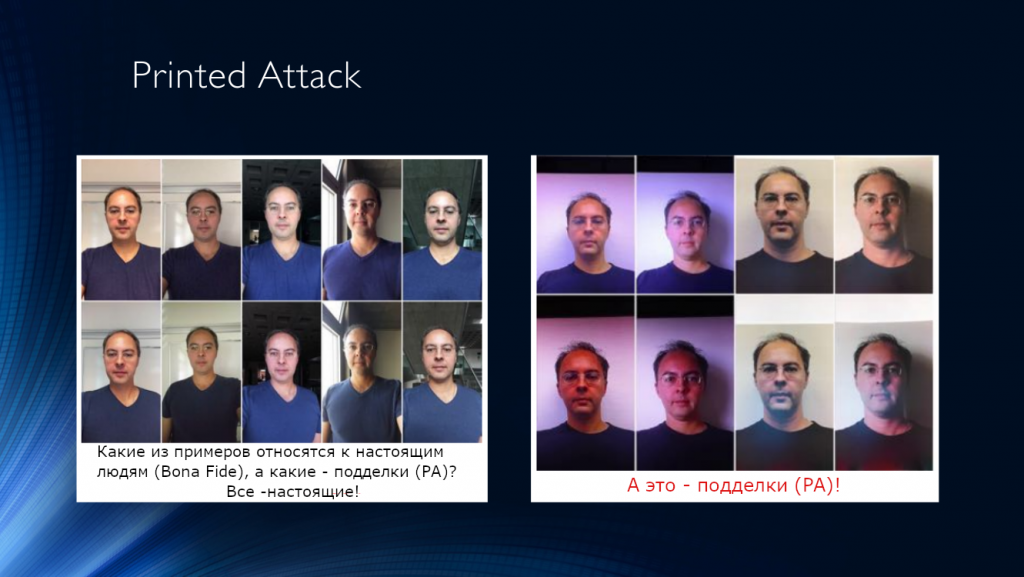

Bona Fide: Refers to a normal, legitimate input or behavior within the system — in other words, any interaction that is NOT an attack.

Presentation attack instrument: The artifact used in an attack, such as an artificially fabricated body part or a physical object intended to deceive the system.

Presentation attack detection: Methods used to automatically detect such attacks.

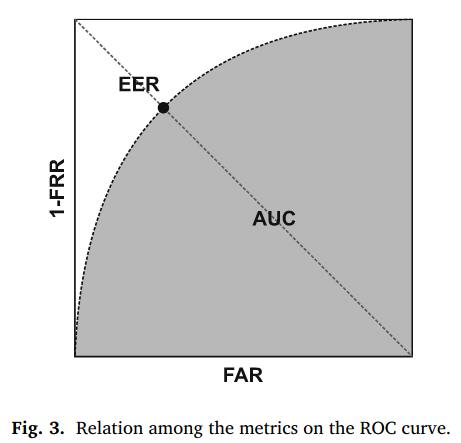

HTER Metric (Half Total Error Rate): HTER is used to evaluate the performance of an anti-spoofing system. It is calculated as the average of two error rates:

- FRR (False Rejection Rate): the rate at which authorized individuals are incorrectly rejected.

- FAR (False Acceptance Rate): the rate at which unauthorized individuals are incorrectly accepted.

HTER = (FAR + FRR) / 2
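The formula can be illustrated with a minimal sketch. It assumes the anti-spoofing system outputs one score per attempt, that higher scores mean "more likely bona fide", and that an attempt is accepted when its score reaches a chosen threshold. The function name, the threshold, and the scores are made up for illustration.

```python
def far_frr_hter(bona_fide_scores, attack_scores, threshold):
    """Return (FAR, FRR, HTER) for a given decision threshold.

    Assumption: an attempt is accepted when its score is >= threshold.
    """
    # FAR: fraction of attack attempts that were wrongly accepted.
    far = sum(s >= threshold for s in attack_scores) / len(attack_scores)
    # FRR: fraction of bona fide attempts that were wrongly rejected.
    frr = sum(s < threshold for s in bona_fide_scores) / len(bona_fide_scores)
    return far, frr, (far + frr) / 2


# Made-up scores, for illustration only.
bona_fide = [0.9, 0.8, 0.75, 0.4]
attacks = [0.1, 0.2, 0.6, 0.3]
far, frr, hter = far_frr_hter(bona_fide, attacks, threshold=0.5)
print(f"FAR={far:.2f} FRR={frr:.2f} HTER={hter:.2f}")
```

With these toy scores, one of the four attacks is accepted and one of the four bona fide attempts is rejected, so FAR, FRR, and HTER are all 0.25.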

In biometric systems, greater emphasis is typically placed on minimizing FAR in order to prevent unauthorized access. Significant progress has been made in this regard. However, reducing FAR often results in an inevitable increase in FRR — meaning legitimate users may be incorrectly classified as intruders.

If you want to reduce the number of phones thrown against the wall after the tenth consecutive rejection, you should pay close attention to FRR.

EER Metric (Equal Error Rate): EER corresponds to the point on the ROC curve where FAR and FRR are equal. With FAR on the x-axis and 1 - FRR on the y-axis, it is the FAR value at the intersection of the ROC curve and the line x + y = 1.
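One simple way to approximate the EER from a finite set of scores is to try every observed score as a threshold and keep the one where FAR and FRR are closest. The sketch below makes the same assumptions as before (higher score means more likely bona fide, accept when the score reaches the threshold). The function name and the scores are illustrative, and real evaluations usually interpolate between thresholds.

```python
def equal_error_rate(bona_fide_scores, attack_scores):
    """Approximate the EER by scanning every observed score as a threshold."""
    best_gap, eer = None, None
    for threshold in sorted(set(bona_fide_scores) | set(attack_scores)):
        far = sum(s >= threshold for s in attack_scores) / len(attack_scores)
        frr = sum(s < threshold for s in bona_fide_scores) / len(bona_fide_scores)
        gap = abs(far - frr)
        # Keep the threshold where the two error rates are closest.
        if best_gap is None or gap < best_gap:
            best_gap, eer = gap, (far + frr) / 2
    return eer


# Made-up scores, for illustration only.
bona_fide_scores = [0.9, 0.8, 0.75, 0.4]
attack_scores = [0.1, 0.2, 0.6, 0.3]
print(equal_error_rate(bona_fide_scores, attack_scores))
```

When no threshold makes the two rates exactly equal, this sketch reports their average at the closest point, which is one common convention among several.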

Types of Attacks

Let us now examine how attackers attempt to deceive recognition systems, supported by illustrative examples.

One of the most common spoofing techniques involves the use of masks. Attackers can directly impersonate another person by wearing a realistic facial mask and presenting it to an identification system. This type of attack is known as mask spoofing.

Another method is the printed attack, where a photograph of oneself or another person is printed on paper and presented to the camera.

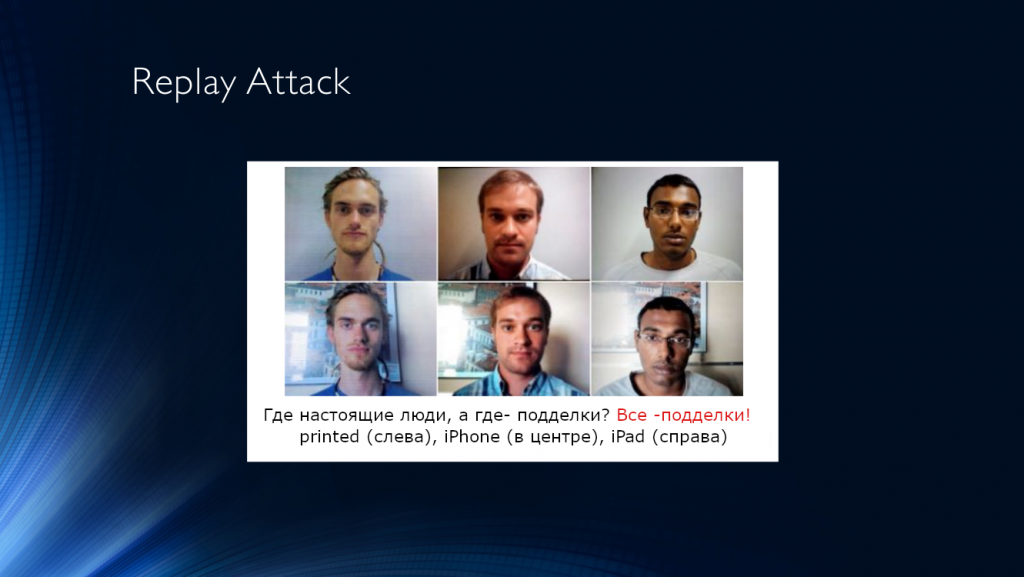

A more sophisticated method is the replay attack. In this case, the attacker presents a device screen to the camera, on which a previously recorded video of another person is played.

Although replay attacks may seem more complex in practice, this does not reduce their effectiveness. On the contrary, such attacks often have a higher probability of bypassing anti-spoofing systems.

This is because many existing face recognition systems rely on temporal analysis for spoof detection — tracking cues such as eye blinking, subtle head movements, facial expressions, or breathing patterns. All of these signals can be replicated through a pre-recorded video.

Application Areas

Wherever face recognition technology is deployed, anti-spoofing measures must also be implemented. Face spoofing and identity fraud are associated with — but not limited to — the following domains:

- Digital banking

- Identity verification at ATMs

- Forensic investigations

- Online interviews and examinations

- Retail crime prevention

- School surveillance systems

- Law enforcement

- Casino security

Traditional Anti-Spoofing Techniques

Face images that are replayed from a video or presented as printed photographs can be detected by analyzing distortion artifacts in image quality. In many cases, certain local patterns can even be identified visually.

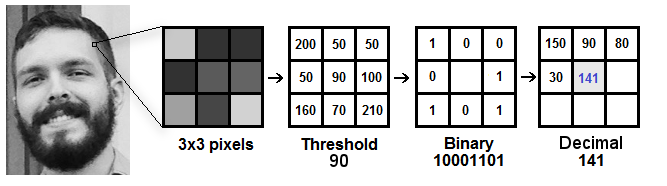

One commonly used approach involves computing Local Binary Patterns (LBP) over different regions of the detected face within a frame. LBP can be summarized as follows:

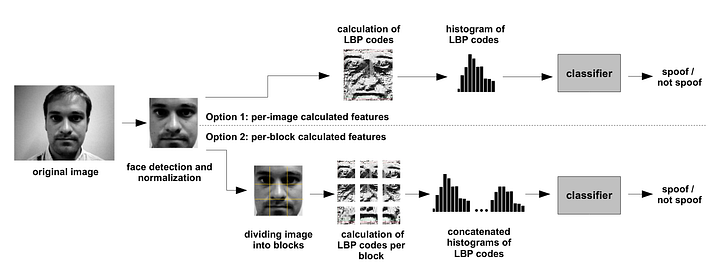

The block diagram of the LBP-based spoof detection algorithm (2012), one of the early image-based face anti-spoofing methods, is shown below:

In this algorithm, LBP is computed for each pixel in the image. The eight neighboring pixels are sequentially compared with the central pixel. If a neighboring pixel has a greater value than the center pixel, it is assigned a value of 1; otherwise, it is assigned 0.

This process results in an 8-bit binary sequence (a code between 0 and 255) for each pixel. A histogram of these per-pixel codes is then generated and used as input to an SVM classifier.
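The per-pixel comparison and the histogram step can be sketched in a few lines of plain Python. This is a simplified illustration rather than the implementation from the 2012 study: it assumes a grayscale image given as a 2D list, skips border pixels, and visits the neighbors clockwise starting from the top-left. The SVM stage is not shown.

```python
def lbp_histogram(image):
    """Compute a 256-bin histogram of 8-neighbor LBP codes.

    `image` is a 2D list of grayscale values. Border pixels are skipped
    because they lack a full 3x3 neighborhood (a simplifying assumption).
    """
    # Neighbor offsets (row, column), clockwise from the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    histogram = [0] * 256
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            center = image[r][c]
            code = 0
            for dr, dc in offsets:
                # Append 1 if the neighbor is greater than the center, else 0.
                code = (code << 1) | (image[r + dr][c + dc] > center)
            histogram[code] += 1
    return histogram


# Toy 3x3 image: bright corners, dark edges, mid-gray center.
toy_image = [[9, 1, 9],
             [1, 5, 1],
             [9, 1, 9]]
toy_histogram = lbp_histogram(toy_image)
print(toy_histogram.index(1))  # the single LBP code present in the image
```

For the toy image, the only interior pixel yields the bit pattern 10101010, that is, code 170. In practice the histogram is often computed per face region and the regional histograms are concatenated into one feature vector.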

The reported HTER of this method is approximately 15%, meaning that a significant portion of attacks can still bypass the system with relatively little effort. However, it is worth noting that, reading HTER as a balanced error rate, roughly 85% of attempts are still handled correctly.

The algorithm was evaluated on the IDIAP Replay-Attack dataset, which consists of 1,200 short videos from 50 participants and includes three types of attacks: printed attacks, mobile attacks, and high-resolution attacks.

Deep Learning-Based Anti-Spoofing Techniques

At a certain point, it became evident that the transition toward deep learning was inevitable. The so-called “deep learning revolution” soon extended to the field of face anti-spoofing.

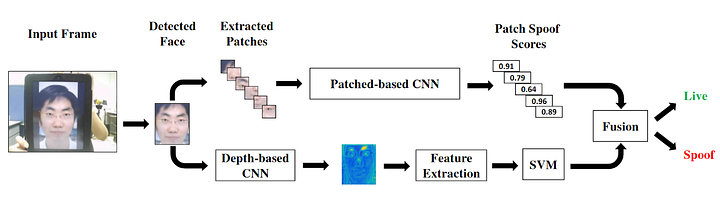

In a 2017 study, a neural network architecture based on patch-level analysis and depth estimation was proposed for spoof detection:

First, the face is detected within the input image. The detected face is then fed into a model consisting of two neural network branches.

The first branch extracts patches from the detected facial region and predicts a spoofing score for each patch. The second branch consists of a depth-based CNN model connected to a feature extractor. In this branch, a depth map of the face is estimated, and the extracted depth features are subsequently provided to an SVM-based classifier that performs binary classification (real vs. fake).

It is important to note that all proposed models/classifiers are trained separately; there is no end-to-end training with a shared loss function.
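The patch branch described above can be sketched at a very high level. The code below only illustrates the idea of scoring patches and combining the results. Averaging the patch scores, the patch size, the patch count, and the `mean_intensity_scorer` placeholder (which stands in for a trained patch CNN) are all assumptions made for this example, not details taken from the study.

```python
import random


def extract_patches(face, patch_size, count, seed=0):
    """Crop `count` random square patches from a 2D list of pixels."""
    rng = random.Random(seed)
    height, width = len(face), len(face[0])
    patches = []
    for _ in range(count):
        top = rng.randint(0, height - patch_size)
        left = rng.randint(0, width - patch_size)
        patches.append([row[left:left + patch_size]
                        for row in face[top:top + patch_size]])
    return patches


def face_spoof_score(face, score_patch, patch_size=4, count=8):
    """Average the per-patch scores into one score for the whole face."""
    patches = extract_patches(face, patch_size, count)
    return sum(score_patch(p) for p in patches) / len(patches)


def mean_intensity_scorer(patch):
    # Hypothetical placeholder for a trained patch CNN: not a real detector.
    pixels = [value for row in patch for value in row]
    return sum(pixels) / (255 * len(pixels))


# Toy 8x8 "face" with uniform brightness, for illustration only.
toy_face = [[255] * 8 for _ in range(8)]
print(face_spoof_score(toy_face, mean_intensity_scorer))
```

Working on patches rather than the full face gives the model many more training samples per image and forces it to rely on local texture cues instead of the overall face shape.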

The method achieved HTER values of 2.27%, 0.21%, and 0.72% on the CASIA-FASD, MSU-USSA, and Replay-Attack datasets, respectively.

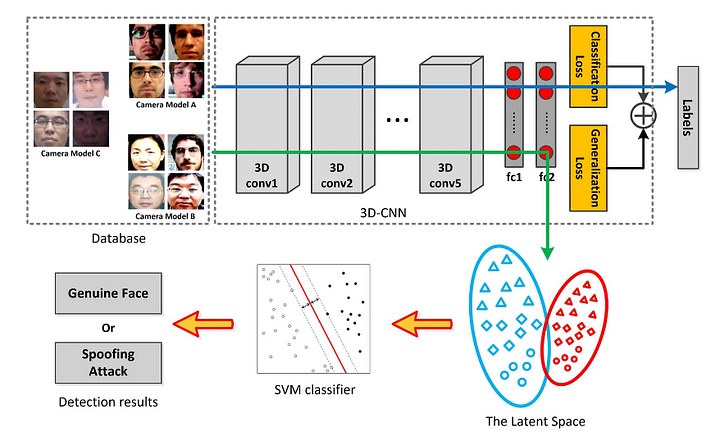

In a 2018 study, a 3D CNN-based architecture was proposed for spoof detection:

In this approach, 3D convolutional neural network layers are used to extract features from temporal image sequences. The loss function combines cross-entropy loss with Maximum Mean Discrepancy (MMD) to improve generalization. This method achieved an HTER of 1.2% on the Idiap dataset.
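To make the MMD term more concrete, here is a minimal sketch of a biased estimate of squared MMD with a Gaussian kernel. It assumes the discrepancy is measured between two batches of feature vectors (for example, from two different capture conditions). The kernel choice, the bandwidth, the `mmd_weight` value, and the cross-entropy number are illustrative assumptions rather than settings from the study.

```python
import math


def gaussian_kernel(a, b, bandwidth=1.0):
    """Gaussian (RBF) kernel between two feature vectors."""
    squared_distance = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-squared_distance / (2 * bandwidth ** 2))


def mmd_squared(source, target, bandwidth=1.0):
    """Biased estimate of squared MMD between two sets of feature vectors."""
    def mean_kernel(xs, ys):
        total = sum(gaussian_kernel(x, y, bandwidth) for x in xs for y in ys)
        return total / (len(xs) * len(ys))

    return (mean_kernel(source, source) + mean_kernel(target, target)
            - 2 * mean_kernel(source, target))


# Made-up 2D features from two hypothetical domains.
domain_a = [[0.0, 0.0], [0.1, -0.1]]
domain_b = [[2.0, 2.0], [2.1, 1.9]]

cross_entropy = 0.35  # placeholder value for the classification loss
mmd_weight = 0.1      # hypothetical trade-off weight
total_loss = cross_entropy + mmd_weight * mmd_squared(domain_a, domain_b)
print(total_loss)
```

The MMD term is close to zero when the two feature distributions match and grows as they drift apart, so minimizing it alongside cross-entropy pushes the network toward features that look similar across domains.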

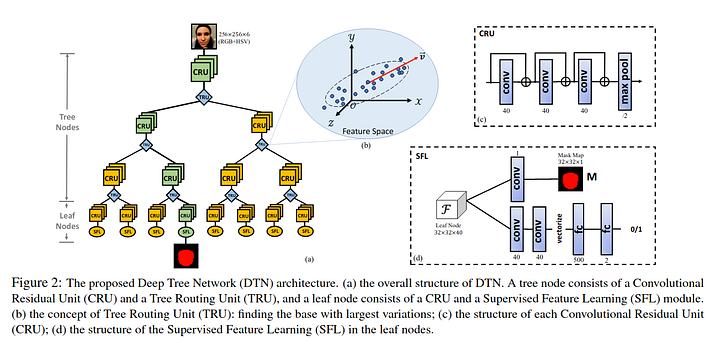

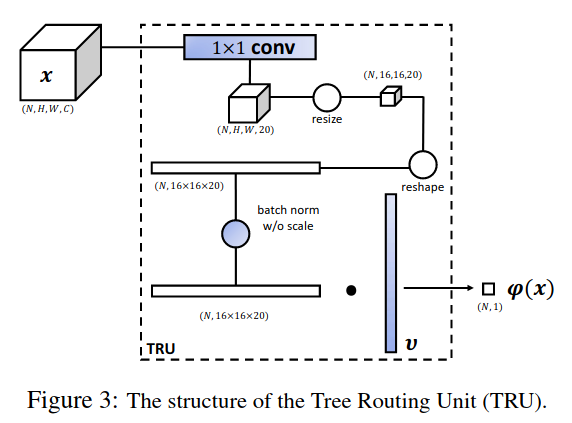

In 2019, a deep tree model was proposed to address the problem of zero-shot face anti-spoofing:

The authors also introduced a spoofing dataset (SiW-M) containing 12 different spoofing types — one of the most diverse datasets available at the time.

The proposed loss function consists of four components:

- Two supervised losses: binary cross-entropy for real/fake classification and mean error for mask supervision

- Two unsupervised losses: a routing loss encouraging a larger PCA basis and a unique loss designed to address imbalanced routing issues in subgroup training

The method achieved an EER of 16.1% on the SiW-M dataset, which was considered state-of-the-art as of 2019.

Memorizing the Data Is Not the Solution

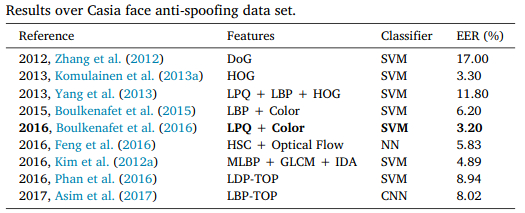

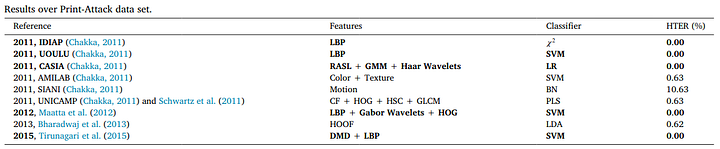

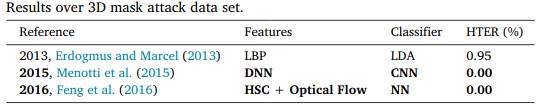

Fortunately, many public face anti-spoofing datasets are available, including CASIA, Idiap, Replay-Attack, and others. Let us take a look at some of the best-performing methods on existing datasets:

As shown in the tables above, when datasets are evaluated individually, the face anti-spoofing problem may appear to be largely solved. However, if you train a neural network on one dataset and attempt to evaluate it on another, the results are far less optimistic.

The results presented in Patel’s 2016 study demonstrate that even when sufficiently complex neural network architectures and seemingly reliable features (such as blinking or texture cues) are used, performance on unseen datasets remains unsatisfactory.

In the table above, a model trained on the USSA dataset was evaluated on the Replay-Attack and FASD datasets. Because existing datasets lack sufficient diversity, models trained on one dataset fail to generalize effectively to others.

Final Remarks

- Nearly all technologies developed for face recognition can, in one way or another, be adapted for face anti-spoofing. Advances in recognition systems often find parallel applications in attack analysis and spoof detection.

- However, there is a clear imbalance between the maturity levels of face recognition and face anti-spoofing technologies. Recognition systems have advanced significantly ahead of protection mechanisms. One of the main barriers to the widespread practical deployment of face recognition systems remains the lack of sufficiently reliable anti-spoofing solutions.

- In the literature, the majority of attention has been directed toward improving face recognition performance, while research on attack detection systems has progressed more slowly.

- Existing datasets have largely reached saturation. On five out of ten benchmark datasets, zero error rates have been reported. While this indicates substantial progress in anti-spoofing performance under controlled conditions, it does not reflect strong generalization capability. There is a clear need for new data and new experimental setups.

- With growing interest in the topic and the rapid adoption of face recognition technologies by major industry players, significant opportunities have emerged for ambitious research teams. At the architectural level, there remains a strong need for fundamentally new solutions.

References

- Souza, L., Oliveira, L., Pamplona, M., & Papa, J. (2018). How far did we get in face spoofing detection? Engineering Applications of Artificial Intelligence, 72, 368–381.

- Chingovska, I., Anjos, A., & Marcel, S. (2012, September). On the effectiveness of local binary patterns in face anti-spoofing. In 2012 BIOSIG-proceedings of the international conference of biometrics special interest group (BIOSIG) (pp. 1–7). IEEE.

- Atoum, Y., Liu, Y., Jourabloo, A., & Liu, X. (2017, October). Face anti-spoofing using patch and depth-based CNNs. In 2017 IEEE International Joint Conference on Biometrics (IJCB) (pp. 319–328). IEEE.

- Li, H., He, P., Wang, S., Rocha, A., Jiang, X., & Kot, A. C. (2018). Learning generalized deep feature representation for face anti-spoofing. IEEE Transactions on Information Forensics and Security, 13(10), 2639–2652.

- Liu, Y., Stehouwer, J., Jourabloo, A., & Liu, X. (2019). Deep tree learning for zero-shot face anti-spoofing. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 4680–4689).

- Patel, K., Han, H., & Jain, A. K. (2016, October). Cross-database face antispoofing with robust feature representation. In Chinese Conference on Biometric Recognition (pp. 611–619). Springer, Cham.